Continuous Delivery and ITIL: Change Management

Published 28 November 2010

Translations: 한국말

Update: for an example of this strategy applied in a large, regulated company, see this case study from Australia's National Broadband Network. (25 July 2013)

Many large organizations have heavyweight change management processes that generate lead times of several days or more between asking for a change to be made and having it approved for deployment. This is a significant roadblock for teams trying to implement continuous delivery. Often frameworks like ITIL are blamed for imposing these kinds of burdensome processes.

However, it's possible to follow ITIL principles and practices in a lightweight way that achieves the goals of effective service management while at the same time enabling rapid, reliable delivery. In this occasional series I'll be examining how to create such lightweight ITIL implementations. I welcome your feedback and real-life experiences.

I'm starting this series by looking at change management for two reasons. First, it's often the bottleneck for experienced teams wanting to pursue continuous delivery, because it represents the interface between development teams and the world of operations. Second, it's the first process in the Service Transition part of the ITIL lifecycle, and it's nice to pursue these processes in some kind of order.

Change Management in ITIL

In the ITIL world, there are three kinds of changes: standard, normal, and emergency changes. Let's put emergency changes to one side for the moment, and distinguish between the other two types. Normal changes are those that go through the regular change management process, which starts with the creation of a request for change (RFC). The RFC is then reviewed and assessed, and either authorized or rejected; if authorized, the change is then planned and implemented.

Standard changes are low-risk changes that are pre-authorized. One of the examples in the ITIL literature is a "low-impact, routine application change to handle seasonal variation" (Service Transition, p48). Each organization will decide the kinds of standard changes it allows, the criteria for a change to be considered "standard", who is allowed to approve them, and the process for managing them. Like normal changes, standard changes must still be recorded and approved. However, the approval can be made by somebody close to the action; it doesn't need to work its way up the management chain.

Even normal changes can vary in terms of the complexity of the change management process they go through. Major service changes that impact multiple organizational divisions will require a detailed change proposal in addition to the RFC, and will need to be approved by the IT management board. Very high-cost or high-risk changes will need approval by the business executive board. However, low-risk changes can be approved by a change advisory board (CAB).
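The tiers above amount to a routing rule. As a minimal sketch, assuming an organization records the hypothetical attributes below on each RFC (the field names, the three-level risk and cost scale, and the ordering of checks are illustrative, not taken from ITIL), the decision might look like this:

```python
from dataclasses import dataclass
from enum import Enum


class Authority(Enum):
    """Who authorizes a change, following the tiers described above."""
    PRE_AUTHORIZED = "pre-authorized (standard change)"
    CAB = "change advisory board"
    IT_MANAGEMENT_BOARD = "IT management board"
    BUSINESS_EXECUTIVE_BOARD = "business executive board"


@dataclass(frozen=True)
class Change:
    # Hypothetical attributes an organization might record on an RFC.
    is_standard: bool        # matches a pre-agreed standard change template
    risk: str                # "low", "medium" or "high"
    cost: str                # "low", "medium" or "high"
    divisions_affected: int  # organizational divisions impacted


def route(change: Change) -> Authority:
    """Pick the authorizing body for a change (illustrative policy only)."""
    # Standard changes are low-risk by definition, so the template match is
    # checked first. They must still be recorded; recording is out of scope here.
    if change.is_standard:
        return Authority.PRE_AUTHORIZED
    if change.risk == "high" or change.cost == "high":
        return Authority.BUSINESS_EXECUTIVE_BOARD
    if change.divisions_affected > 1:
        return Authority.IT_MANAGEMENT_BOARD
    # Everything else (including "medium" risk, a simplification) goes to the CAB.
    return Authority.CAB
```

For example, `route(Change(False, "low", "low", 1))` returns `Authority.CAB`. A real policy will have more dimensions; writing it down explicitly is what makes it possible to agree it with the CAB ahead of time.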

Often CAB approval is a lengthy and painful process. However, it certainly doesn't need to be. The ITIL v3 Service Transition book specifies both that CAB approval can be performed electronically, and that not everybody in the CAB needs to approve every change (pp58-59). Agreeing on exactly who needs to authorize a given type of change, and making sure those people know in advance the information they need to decide whether to approve it, can produce a fully compliant authorization process that can be performed in seconds, in a just-in-time fashion.
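Agreeing in advance who must sign off on each type of change can be captured as data. The sketch below assumes a hypothetical mapping from change type to the required subset of CAB members; the type names and member roles are invented for illustration, and a real system would persist approvals rather than hold them in memory.

```python
from dataclasses import dataclass, field

# Hypothetical pre-agreed mapping: change type -> CAB members who must approve.
REQUIRED_APPROVERS: dict[str, frozenset[str]] = {
    "app-release": frozenset({"ops-lead", "service-owner"}),
    "db-schema": frozenset({"ops-lead", "dba"}),
}


@dataclass
class ApprovalRequest:
    change_id: str
    change_type: str
    approvals: set[str] = field(default_factory=set)

    def approve(self, member: str) -> None:
        """Record an electronic approval from a CAB member."""
        self.approvals.add(member)

    def missing(self) -> frozenset[str]:
        """Required approvers who have not yet signed off.

        An unmapped change type raises KeyError: it has no pre-agreed
        subset, so it would need to go to the full CAB instead.
        """
        return REQUIRED_APPROVERS[self.change_type] - self.approvals

    def is_authorized(self) -> bool:
        return not self.missing()
```

For a request of type `"app-release"`, `is_authorized()` returns `True` only once both `"ops-lead"` and `"service-owner"` have called `approve`; until then, `missing()` shows who still needs to respond.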

Close collaboration between the CAB and the delivery team is also important in order to make the approval process efficient. For example, my colleague Tom Sulston suggests that if you want to do a release at the end of every iteration, you should invite the relevant members of the CAB to your iteration planning meeting (via conference call if necessary). Thus they can see exactly what is coming down the pipeline, and ask questions and provide feedback to ensure that they are comfortable giving approval when the time comes for release.

We can see that ITIL provides several mechanisms which enable lightweight change management processes:

- Standard changes that are pre-approved.

- CAB approvals that are performed electronically, rather than at face-to-face meetings.

- Arranging in advance which subset of CAB members are required to approve each type of change.

How do we ensure that as many as possible of our changes can use these mechanisms?

Using Continuous Delivery to Reduce the Risk of Change

ITIL follows the common-sense doctrine that each change must be evaluated primarily in terms of both its risk and value to the business. So the best way to enable a low-ceremony change management process is to reduce the risk of each change and increase its value. Continuous delivery does exactly this by ensuring that releases are performed regularly from early on in the delivery process, and ensuring that delivery teams are working on the most valuable thing they could be at any given time, based on feedback from users.

[caption id="attachment_220" align="alignnone" width="550" caption="Figure 1: Traditional delivery"] [/caption]

[/caption]

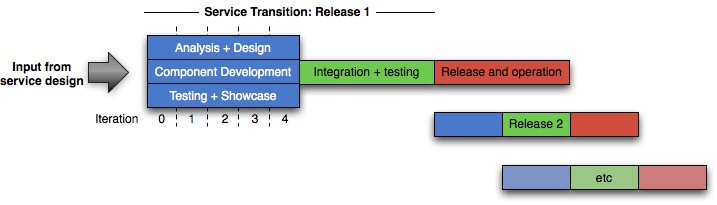

In a traditional delivery lifecycle, even with agile projects, the delivery cadence looks rather like figure 1. The first release can often take some time: for really large projects, years, and even for small projects typically at least six months. Further releases typically occur with a relatively regular rhythm - perhaps every few months for a major release, with a minor release every month.

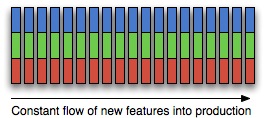

In the world of continuous delivery, and in particular continuous deployment, things look somewhat different. New projects will provision their production environment as part of project inception, and begin releasing to it as early as possible in the delivery process - potentially long before the service is actually made available to users. Further changes are made as frequently as possible, so that in effect there are no subsequent major releases, or indeed minor releases - every further release is a micro release. Project managers will focus on optimizing for cycle time, ensuring that changes to the system get put live as rapidly as possible. This process is shown in figure 2.

[caption id="attachment_220" align="alignnone" width="265" caption="Figure 2: Continuous delivery"] [/caption]

[/caption]

Continuous delivery has several important effects both on the delivery process and on the organization as a whole. First of all, it means operations must work closely with the rest of the delivery team from early on in the project. The combined team must provision the production environment and testing environments, and create automated processes both for managing their configuration and for deploying the nascent application to them as part of the deployment pipeline.

Because operations personnel get to see the application from early on in its development, they can feed their requirements (such as monitoring capabilities) into the development process. Because developers get to see the system in production, they can run realistic load tests from early on and get rapid feedback on whether the architecture of the application will support its cross-functional requirements. This early and iterative release process also exerts a strong pressure to ensure that the system is production ready throughout its lifecycle.

Continuous delivery enables a low-ceremony change management process by ensuring that the first (and riskiest) release is done long before users are given access to the system. Further releases are performed frequently - perhaps as often as several times a day, and certainly no less often than once per iteration. This reduces the delta of change, reducing the risk of each individual release, and making it easier to remediate failed changes. Because continuous delivery mandates that each release must be comprehensively tested in a production-like environment, the risk of each release is further reduced.

I said earlier that continuous delivery ensures that value is more efficiently delivered to the business. But what is the value of releasing so early in the delivery process, long before the anticipated business value of the system can be realized? The value proposition of continuous delivery (keeping systems in a working state throughout their development and operational lifecycle) is threefold. First, it allows the customer to see the system, and perhaps show it to selected users, from its earliest stages. This gives the customer much more fine-grained control over what is delivered, and may even reveal more radical changes that need to be made to the service in order to make it more valuable. Second, incremental releases from early on are much less risky than a big-bang release following an integration phase. Finally, it gives project and program managers real data on the progress of the project.

Change Management and the Deployment Pipeline

The deployment pipeline supports an efficient change management process in several ways.

First of all, it provides the mechanism through which changes are evaluated for risk and then applied. Every proposed change to your systems, whether to an application, your infrastructure, your database schema, or your build, test and deployment process itself, should be made via source control. The deployment pipeline then performs automated tests to determine that the change won't break the system, and makes changes that pass the automated tests available to be deployed to manual testing and production environments in a push-button fashion, with appropriate authorization controls.
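To make the flow concrete, here is a minimal in-memory sketch of a pipeline that runs automated stages against a revision and then offers push-button deployment guarded by an authorization check. It is not the API of Go or of any other real release management tool; the stage names, the `deployers` policy and the returned message are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

# A stage is a name plus an automated check run against a source revision.
Stage = tuple[str, Callable[[str], bool]]


@dataclass
class Pipeline:
    stages: list[Stage]
    # Hypothetical authorization policy: environment -> users allowed to deploy.
    deployers: dict[str, set[str]]
    # Stages each revision has passed, kept for later audit.
    passed: dict[str, list[str]] = field(default_factory=dict)

    def run(self, revision: str) -> bool:
        """Run the automated stages in order, stopping at the first failure."""
        self.passed[revision] = []
        for name, check in self.stages:
            if not check(revision):
                return False
            self.passed[revision].append(name)
        return True

    def deploy(self, revision: str, environment: str, user: str) -> str:
        """Push-button deployment, allowed only for revisions that passed every
        stage and only for users authorized for the target environment."""
        if self.passed.get(revision) != [name for name, _ in self.stages]:
            raise PermissionError(f"{revision} has not passed every stage")
        if user not in self.deployers.get(environment, set()):
            raise PermissionError(f"{user} may not deploy to {environment}")
        return f"deployed {revision} to {environment} by {user}"
```

Because a failing revision never reaches the full list of passed stages, the only way to get it into an environment is to commit a fix and run the pipeline again, which keeps source control as the single route for every change.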

A good pipeline implementation should be able to provide reports that tell you exactly what has changed since the last time you deployed. People approving deployments can use this information, together with reports from the automated and manual testing performed through the deployment pipeline and other metrics such as the length of time since the last change, to assess the risk of the change.
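With Git, `git log --oneline LAST..HEAD` (where `LAST` is the last deployed revision) lists the raw commits. The sketch below assumes the pipeline already holds that history as a list of hypothetical `Commit` records, ordered oldest first, and turns it into a short report for approvers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    revision: str
    author: str
    message: str


def changes_since(history: list[Commit], last_deployed: str) -> list[Commit]:
    """Commits made after the last deployed revision.

    `history` is ordered oldest first; raises ValueError if
    `last_deployed` does not appear in it.
    """
    revisions = [c.revision for c in history]
    return history[revisions.index(last_deployed) + 1:]


def change_report(history: list[Commit], last_deployed: str) -> str:
    """Plain-text summary for the people approving the next deployment."""
    pending = changes_since(history, last_deployed)
    lines = [f"{len(pending)} change(s) since {last_deployed}:"]
    lines += [f"  {c.revision} {c.author}: {c.message}" for c in pending]
    return "\n".join(lines)
```

A short report is itself a risk signal: the fewer commits listed, the smaller the delta an approver has to reason about.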

Because the pipeline is used to control the delivery process, it is also the ultimate auditing and compliance tool. Your release management tool, which controls the deployment pipeline, should be able to tell you information like:

- Which version of each of your apps is currently deployed into each of your environments.

- Which parts of the pipeline every version of your app has been through, and what the results were.

- Who approved every build, test and deployment process, which machines each process ran on and when, its logs, and exactly what its inputs and outputs were.

- Traceability from each deployed artifact back to the version in version control that it was ultimately derived from.

- Key process metrics like lead time and cycle time.

If your tool doesn't give you this information, get one that does, such as the one that I manage: Go from ThoughtWorks Studios.

This kind of information is essential as part of the metrics gathering that needs to be performed in order to make go/no-go decisions, do root cause analysis when changes fail, and determine the effectiveness of your change control process as part of continuous improvement.
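As a sketch of how such audit data might be queried, assume a hypothetical `Deployment` record written by the pipeline on every deployment. The functions below answer three of the questions listed above: what is deployed where, which revision a version came from, and a lead-time figure. Organizations define lead time and cycle time differently; here lead time is assumed to be measured from commit to production deployment.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass(frozen=True)
class Deployment:
    environment: str
    version: str            # build/artifact version
    source_revision: str    # revision in version control it was built from
    approved_by: str
    committed_at: datetime  # when the source revision was committed
    deployed_at: datetime


def current_versions(log: list[Deployment]) -> dict[str, str]:
    """Most recently deployed version in each environment."""
    latest: dict[str, Deployment] = {}
    for d in log:
        if d.environment not in latest or d.deployed_at > latest[d.environment].deployed_at:
            latest[d.environment] = d
    return {env: d.version for env, d in latest.items()}


def trace(log: list[Deployment], version: str) -> str:
    """Revision in version control that a deployed version was built from."""
    for d in log:
        if d.version == version:
            return d.source_revision
    raise KeyError(version)


def median_lead_time(log: list[Deployment]) -> timedelta:
    """Median commit-to-production time (raises StatisticsError if none)."""
    return median(d.deployed_at - d.committed_at
                  for d in log if d.environment == "production")
```

Tracking the median over time is one way to see whether changes to the change management process are actually shortening the path to production.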

Leveraging Standard Changes

It's my contention that standard changes should be much more widely used than they are. In particular, all deployments to non-production environments should be standard changes. This isn't common right now for the simple reason that it's often hard to remediate bad deployments. Thus operations people naturally try to erect a barrier which requires a certain level of effort and evidence to surmount before deployments are allowed to happen to environments they control.

Addressing this problem requires a two-pronged approach. First, there must be a sufficient level of automated testing at all levels before a build ever gets towards operations-controlled environments. That means implementing a deployment pipeline which includes unit tests, component tests, and end-to-end acceptance tests (functional and cross-functional) that are run in a production-like environment. Worried that pressing the "deploy" button might break stuff? That means your automated testing and deployment processes are not up to snuff.
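The policy argued for here can be expressed as a simple rule. This sketch assumes hypothetical stage names for the test levels listed above and, conservatively, leaves production deployments as normal changes, since the argument here concerns non-production environments.

```python
from dataclasses import dataclass

# Hypothetical stage names for the test levels described above.
REQUIRED_STAGES = frozenset(
    {"unit", "component", "acceptance-functional", "acceptance-cross-functional"}
)


@dataclass(frozen=True)
class DeploymentRequest:
    build: str
    environment: str
    passed_stages: frozenset[str]


def classify(request: DeploymentRequest) -> str:
    """Decide how a deployment request is handled under this policy.

    "normal":   production; goes through the agreed CAB approval.
    "standard": non-production and every required stage passed; pre-authorized.
    "blocked":  non-production but not fully tested; not offered for deployment.
    """
    if request.environment == "production":
        return "normal"
    if REQUIRED_STAGES <= request.passed_stages:
        return "standard"
    return "blocked"
```

Under this rule the only way to get a build into an operations-controlled test environment without a CAB decision is to pass the full automated test suite first.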

Second, it must be easy to remediate a deployment when something goes wrong. In the case of non-production deployments which don't need to be zero-downtime, that means either rolling back or re-provisioning the environment. The more projects I see, the more I am convinced that in most cases the first thing teams need to address to improve their delivery process is the ability to provision a new environment in a fully automated way using a combination of virtualization and data center management tools like Puppet, Chef, BladeLogic, or System Center. Assuming you package up your application, data center management tools can also perform rollbacks by just uninstalling your packages or reinstalling the previous version.

Once all this automation is in place, it is possible to kick off a positive feedback cycle. Deployments happen more frequently because they are lower risk, and in turn, the act of practicing deployments more frequently drives out problems earlier in the delivery cycle when they are cheaper to fix, further reducing the risk of deployment.
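The rollback half of this can be sketched as a function that installs a package version, runs a smoke test, and reinstalls the previous version on failure. The `install` and `smoke_test` callables are placeholders (assumptions): in practice `install` might wrap a Puppet or Chef run, or a package manager command such as `apt-get install myapp=1.2.3`, where `myapp` is a hypothetical package name.

```python
from typing import Callable


def deploy_with_rollback(
    install: Callable[[str], None],
    smoke_test: Callable[[], bool],
    new_version: str,
    previous_version: str,
) -> str:
    """Install new_version; if installing it fails or the smoke test fails,
    reinstall previous_version. Returns the version left installed."""
    try:
        install(new_version)
        healthy = smoke_test()
    except Exception:
        # A failed install is treated the same as a failed smoke test.
        healthy = False
    if healthy:
        return new_version
    # Roll back by reinstalling the previous package; if this also fails,
    # the exception propagates and the environment should be re-provisioned.
    install(previous_version)
    return previous_version
```

Returning the version that ended up installed lets the pipeline record the outcome of every attempt, successful or not, alongside the rest of its audit trail.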

Conclusion

Continuous delivery and the deployment pipeline allow you to make effective use of the lightweight mechanisms ITIL provides for change management. However, this in turn relies on building up trust between the delivery team and the people on the CAB who are accountable for approving changes. Continuous delivery helps with this by ensuring the change process has been exercised and tested many times before the service is made available to users. Nevertheless, as part of a continuous improvement process, the change management and delivery processes must be constantly monitored and measured to improve their effectiveness - a process which is in turn enabled by the tight feedback loop that frequent releases provide.

Finally, it is important to note that there is of course a cost associated with continuous delivery. Potentially expensive environments must be requisitioned and provisioned much earlier in the delivery process (although virtualization and cloud computing can significantly reduce these costs). Everybody involved in the service management lifecycle - from customers to developers to operations staff - needs to be involved in the development and operation of the service throughout its lifecycle.[1] This requires high levels of communication and feedback, which inevitably incurs an overhead. Although continuous delivery can be applied to all kinds of software delivery projects, from embedded systems through to software as a service, it is only really appropriate for strategic IT projects.

So here are my recommendations:

- Create a deployment pipeline for your systems as early as possible, including automating build, tests, deployment and infrastructure management, and continue to evolve it as part of continuous improvement of your delivery process.

- Release your service from as early on as possible in its lifecycle - long before it is made available to users. This means having operations personnel involved in projects from the beginning.

- Break down further functionality into many small, incremental, low-risk changes that are made frequently so as to exercise the change management process. This helps to build up trust between everyone involved in delivery, including the CAB, and provides rapid feedback on the value of each change.

- Ensure that the people required to approve changes are identified early on and kept fully up-to-date on the project plan.

- Make sure everybody knows the risk and the value of each change at the time the work is scheduled, not when you want approval. Short lead times ensure that CAB members won't have time to forget what is coming down the pipeline for their approval.

- Make sure authority for approving changes is delegated as far as possible down into the delivery organization, commensurate of course with the risk of each change.

- Use a just-in-time electronic system for approving changes.

- Use standard changes where appropriate.

- Keep monitoring and improving the change management process so as to improve its efficiency and hence its effectiveness.

What I'm not suggesting is trying to bypass the CAB or get rid of face-to-face CAB meetings. Face-to-face CAB meetings serve an important purpose, including supervising, monitoring and managing the process I describe here. And every change should be approved by the agreed subset of the CAB, who can decide to push back on the change or require that it be considered by a larger group if necessary.

Thanks to Tom Sulston for his feedback and suggestions.

[1] This in turn suggests that the most effective mechanism for delivering services in this way is cross-functional product teams that are responsible for a service from inception to retirement. If this vision is to be made a reality, it will involve fundamental changes to the way organizations work, from governance structures right down to the grass-roots level, a discussion beyond the scope of this article.